
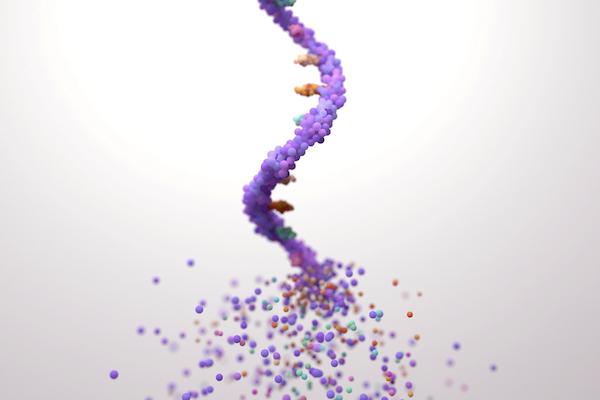

In a typical foundry, raw materials like steel and copper are melted down and poured into molds to assume new shapes and functions. The U.S. National Science Foundation Artificial Intelligence-driven RNA Foundry (NSF AIRFoundry), led by the University of Pennsylvania and the University of Puerto Rico and supported by an $18-million, six-year grant, will serve much the same purpose, only instead of smithing metal, the “BioFoundry” will create molecules and nanoparticles.

NSF AIRFoundry is one of five newly created BioFoundries, each of which will have a different focus. The facility brings together researchers from Penn Engineering, Penn Medicine’s Institute for RNA Innovation, the University of Puerto Rico–Mayagüez (UPR-M), Drexel University, the Children’s Hospital of Philadelphia (CHOP) and InfiniFluidics, and will be physically located in West Philadelphia and at UPR-M. It will focus on ribonucleic acid (RNA), the tiny molecule essential to genetic expression and protein synthesis that played a key role in the COVID-19 vaccines, which saved tens of millions of lives.

The facility will use AI to design, optimize and synthesize RNA and delivery vehicles by augmenting human expertise, enabling rapid iterative experimentation, and providing predictive models and automated workflows to accelerate discovery and innovation.

“With NSF AIRFoundry, we are creating a hub for innovation in RNA technology that will empower scientists to tackle some of the world’s biggest challenges, from health care to environmental sustainability,” says Daeyeon Lee, Russell Pearce and Elizabeth Crimian Heuer Professor in Chemical and Biomolecular Engineering in Penn Engineering and NSF AIRFoundry’s director.

“Our goal is to make cutting-edge RNA research accessible to a broad scientific community beyond the health care sector, accelerating basic research and discoveries that can lead to new treatments, improved crops and more resilient ecosystems,” adds Nobel laureate Drew Weissman, Roberts Family Professor in Vaccine Research in Penn Medicine, Director of the Penn Institute for RNA Innovation and NSF AIRFoundry’s senior associate director.

The facility will catalyze innovation in the field by leveraging artificial intelligence (AI), which has already shown great promise in drug discovery by poring over vast amounts of data to find hidden patterns. “By integrating artificial intelligence and advanced manufacturing techniques, the NSF AIRFoundry will revolutionize how we design and produce RNA-based solutions,” says David Issadore, Professor in Bioengineering and in Electrical and Systems Engineering at Penn Engineering and the facility’s associate director of research coordination.

Read the full story on the Penn AI website.